Platform Architecture

Future-Ready Platform

- Composable by design

- Dynamic architecture

- Custom logic via lambdas

- Independent scaling

- Faster releases, lower risk

- Future-proof foundation

Simple explanation

We keep the platform strong at the center and flexible at the edges. Core services stay reusable, optional modules are attached only when a client needs them, and lambdas capture the business rules that change fastest. Clients get a platform that feels tailored, while the architecture still scales operationally. This also answers the central trade-off: microservices create agility, but only if complexity is managed deliberately.

flowchart LR

A[Client requirements] --> B[Shared core services]

B --> C{Optional component needed?}

C -- Yes --> D[Attach component]

C -- No --> E[Skip component]

D --> F{Custom business rule?}

E --> F

F -- Yes --> G[Invoke custom lambda]

F -- No --> H[Use standard service flow]

G --> I[Event bus and telemetry]

H --> I

I --> J{Requirements change?}

J -- Add --> D

J -- Remove --> K[Detach component]

K --> I

This lifecycle reflects the architectural logic behind independent service deployment, API-gateway mediation, service discovery, event-driven decoupling, and zero-downtime configuration updates.
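
The attach, skip, and detach decisions in the flowchart can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the platform's actual implementation: `ClientPlatform`, `core_service`, `standard_flow`, and `add_loyalty_points` are hypothetical names, and the event bus is reduced to an in-memory list.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

# A handler takes a request/result dict and returns an updated copy.
Handler = Callable[[dict], dict]


def core_service(request: dict) -> dict:
    """Shared core step that every client passes through (hypothetical)."""
    return {**request, "core": True}


def standard_flow(result: dict) -> dict:
    """Default path used when no custom business rule is configured."""
    return {**result, "flow": "standard"}


def add_loyalty_points(result: dict) -> dict:
    """Example optional component: one point per 10 units of `amount`."""
    return {**result, "loyalty_points": result["amount"] // 10}


@dataclass
class ClientPlatform:
    """Per-client composition: shared core, optional components, custom rule."""
    components: dict[str, Handler] = field(default_factory=dict)
    custom_rule: Optional[Handler] = None
    # Stand-in for the event bus and telemetry: (event name, payload) pairs.
    events: list[tuple[str, dict]] = field(default_factory=list)

    def attach(self, name: str, handler: Handler) -> None:
        """Add an optional component without touching the core."""
        self.components[name] = handler
        self.events.append(("component.attached", {"name": name}))

    def detach(self, name: str) -> None:
        """Remove an optional component when requirements change."""
        self.components.pop(name, None)
        self.events.append(("component.detached", {"name": name}))

    def handle(self, request: dict) -> dict:
        result = core_service(request)
        # Only components attached for this client run.
        for handler in self.components.values():
            result = handler(result)
        # The custom lambda replaces the standard flow when present.
        if self.custom_rule is not None:
            result = self.custom_rule(result)
        else:
            result = standard_flow(result)
        self.events.append(("request.handled", result))
        return result


platform = ClientPlatform()
platform.attach("loyalty", add_loyalty_points)
platform.custom_rule = lambda r: {**r, "flow": "custom"}
response = platform.handle({"amount": 120})
```

The design point of the sketch is that client-specific behaviour lives in `components` and `custom_rule`, so changing a client's requirements mutates configuration rather than forking `core_service`.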

Composable Microservices for Client-Specific Growth

Technical Note

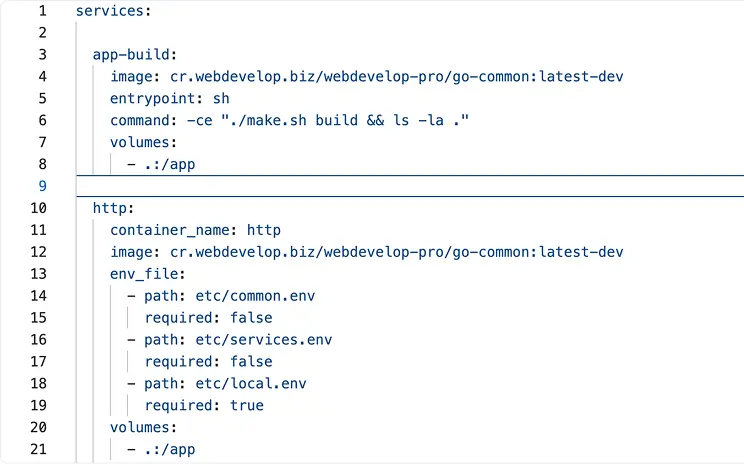

Implementation pattern:

- Expose a single API gateway as the stable front door for routing, aggregation, authentication, and throttling.

- Let internal services find one another through service discovery or DNS-based service names; use an event bus or messaging layer for asynchronous reactions.

- Apply serverless functions to handle custom, bursty, or workflow-driven logic.

- Gateways reduce client coupling, and event-driven patterns decouple producers from consumers.

- Serverless functions reduce infrastructure overhead while scaling on demand.
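
The pattern above can be sketched as a small in-memory model. Everything here is an assumption made for illustration: `ServiceRegistry`, `EventBus`, and `ApiGateway` are hypothetical classes, discovery is a dictionary lookup rather than DNS, throttling is a simple per-key call counter with no time window, and the "serverless function" is an ordinary callback subscribed to an event topic.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], dict]


class ServiceRegistry:
    """In-memory stand-in for service discovery by name."""

    def __init__(self) -> None:
        self._services: dict[str, Handler] = {}

    def register(self, name: str, handler: Handler) -> None:
        self._services[name] = handler

    def resolve(self, name: str) -> Handler:
        return self._services[name]


class EventBus:
    """Minimal synchronous publish/subscribe bus."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Callable[[dict], None]]] = defaultdict(list)

    def subscribe(self, topic: str, fn: Callable[[dict], None]) -> None:
        self._subscribers[topic].append(fn)

    def publish(self, topic: str, payload: dict) -> None:
        # Producers do not know who, if anyone, is listening.
        for fn in self._subscribers[topic]:
            fn(payload)


class ApiGateway:
    """Single front door: authentication, throttling, routing."""

    def __init__(self, registry: ServiceRegistry, bus: EventBus,
                 api_keys: set[str], rate_limit: int) -> None:
        self._registry = registry
        self._bus = bus
        self._api_keys = api_keys
        self._rate_limit = rate_limit          # max calls per key (simplified)
        self._calls: dict[str, int] = defaultdict(int)
        self._routes: dict[str, str] = {}      # path -> service name

    def add_route(self, path: str, service_name: str) -> None:
        self._routes[path] = service_name

    def handle(self, path: str, api_key: str, body: dict) -> dict:
        if api_key not in self._api_keys:
            return {"status": 401}
        self._calls[api_key] += 1
        if self._calls[api_key] > self._rate_limit:
            return {"status": 429}
        service_name = self._routes.get(path)
        if service_name is None:
            return {"status": 404}
        response = self._registry.resolve(service_name)(body)
        # Asynchronous reactions hang off the event, not off the caller.
        self._bus.publish(f"{service_name}.completed", response)
        return {"status": 200, "body": response}


registry, bus = ServiceRegistry(), EventBus()
registry.register("orders", lambda body: {"order_id": 1, "total": body["total"]})
audit_log: list[dict] = []
bus.subscribe("orders.completed", audit_log.append)  # stand-in for a serverless function
gateway = ApiGateway(registry, bus, api_keys={"demo-key"}, rate_limit=100)
gateway.add_route("/orders", "orders")
result = gateway.handle("/orders", "demo-key", {"total": 42})
```

Clients see only the gateway path, so the `orders` handler can be replaced or moved without changing callers, and new reactions are added by subscribing to `orders.completed` rather than by editing the service.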

Comparison Table

The comparison below is a directional synthesis of official vendor guidance and recent cloud-native analysis. It compares operating models rather than benchmarked pricing, so the flexibility, speed, and cost ratings should be read as strategic tendencies, not absolutes.

| Approach | Flexibility | Deployment speed | Customization | Cost profile |
|---|---|---|---|---|
| Our composable microservices + custom lambdas | Very high - optional components can be enabled, omitted, or replaced per client | High - independent releases plus on-demand extensions | Very high - client-specific logic lives in lambdas, not core forks | Efficient for variable demand - optional features and serverless extensions avoid unnecessary always-on spend |
| Monolith | Low - one codebase, tighter coupling, broader change impact | Low to medium - releases tend to move together | Medium - customization often becomes branching or code debt | Can look cheap early, but scaling and change become expensive |
| Traditional microservices | High - service-level change is possible, but the estate is often fixed | Medium - faster than monoliths, but more operational coordination | High - but customization often adds service sprawl or heavier platform overhead | Often higher ops overhead - more moving parts, observability, discovery, and deployment complexity |

The commercial advantage of this model is that it aims to keep the option value of decomposition without forcing every client into the same fixed service footprint. That is consistent with recent CNCF commentary on business optionality and with guidance that serverless patterns can reduce operational weight in microservice environments.